Tag: Caching

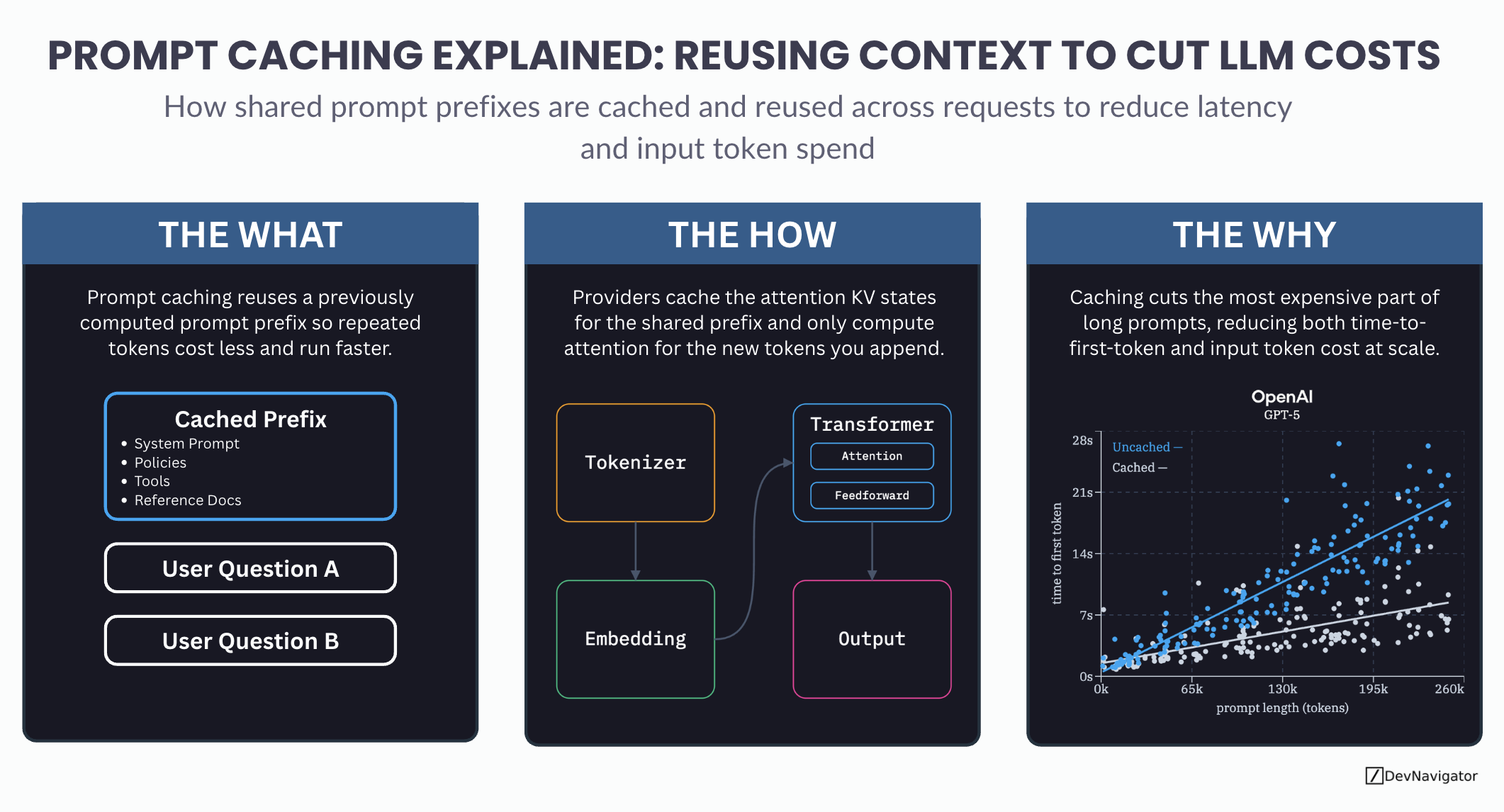

Prompt Caching Explained: A Smarter Method for Reusing Context to Cut LLM Costs

Prompt caching is one of the most important cost and performance optimizations quietly shaping modern LLM applications. As teams scale agents, RAG pipelines, and long-context workflows, the same large prompt…