Tag: Prompt Engineering

-

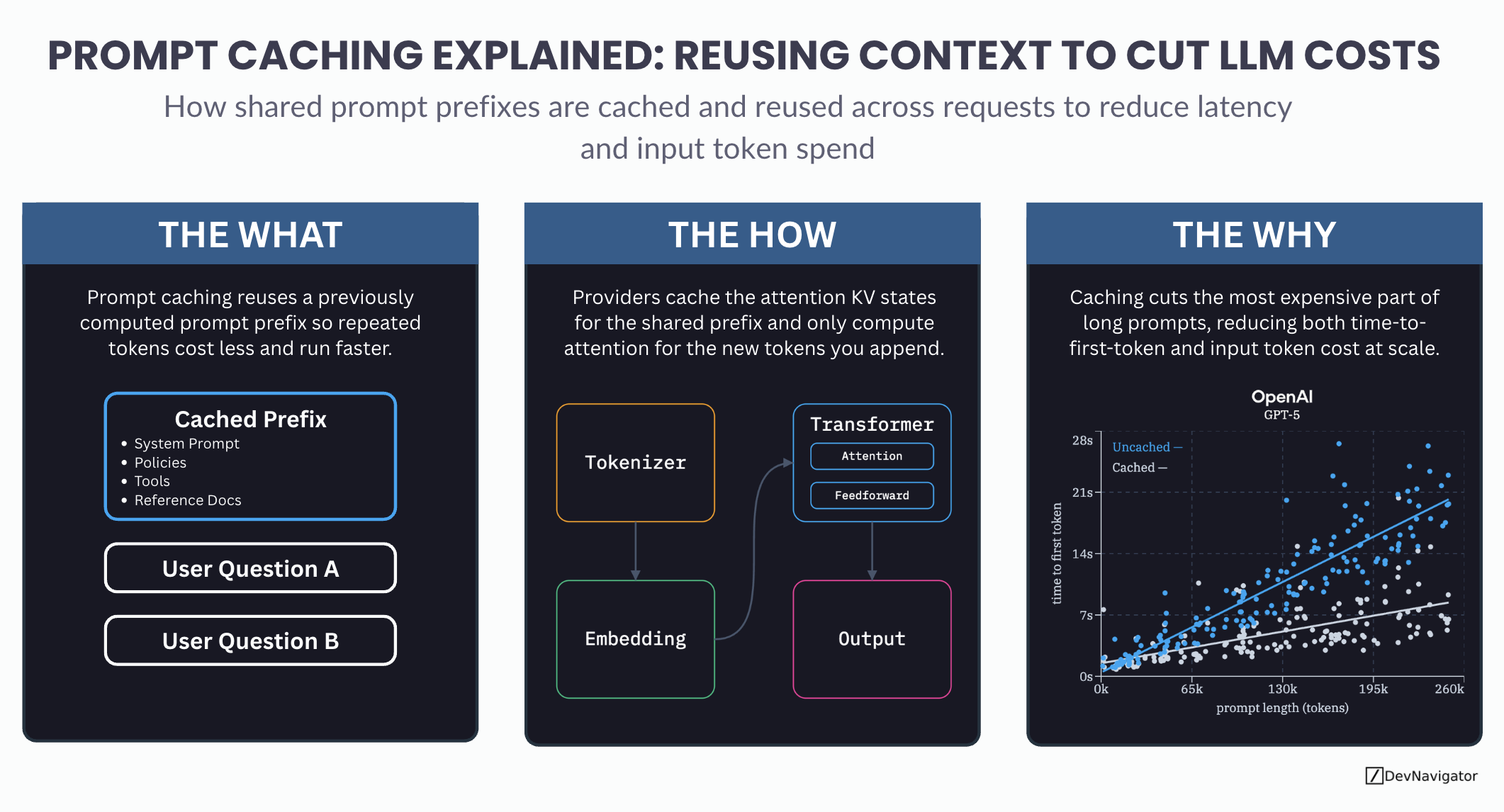

Prompt Caching Explained: A Smarter Method for Reusing Context to Cut LLM Costs

Prompt caching is one of the most important cost and performance optimizations quietly shaping modern LLM applications. As teams scale agents, RAG pipelines, and long-context workflows, the same large prompt…

-

The 5 Prompt Engineering Best Practices to Improve AI Responses

Most people assume AI performance is determined solely by the model itself, but in practice, the quality of the prompt often matters just as much, if…

-

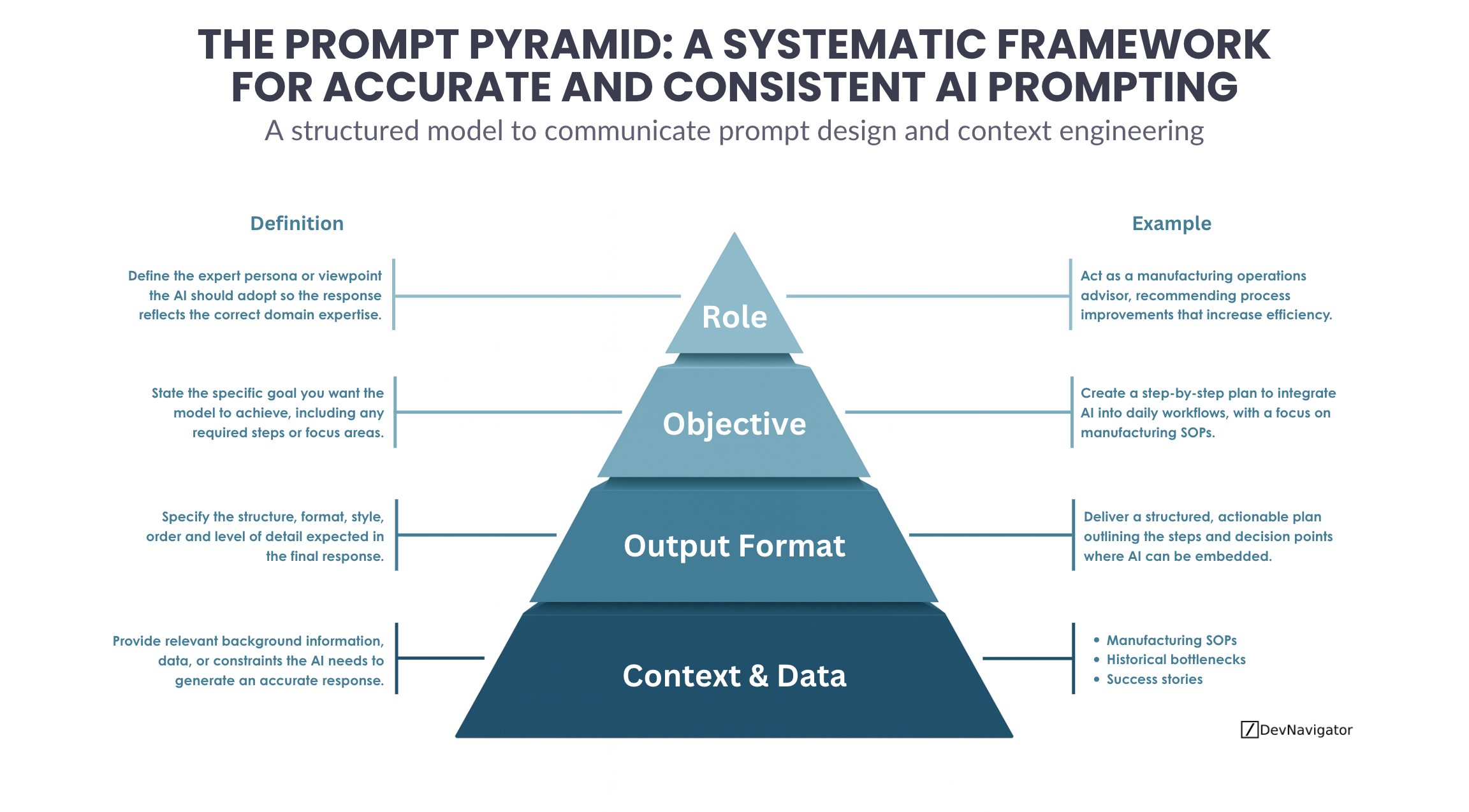

The Prompt Pyramid: A 4-Layer Framework for More Accurate and Consistent AI Prompting

The Prompt Pyramid provides a practical, repeatable framework for designing AI prompts that consistently produce accurate, relevant, and actionable results. As organizations increasingly rely on AI for analysis, decision support,…