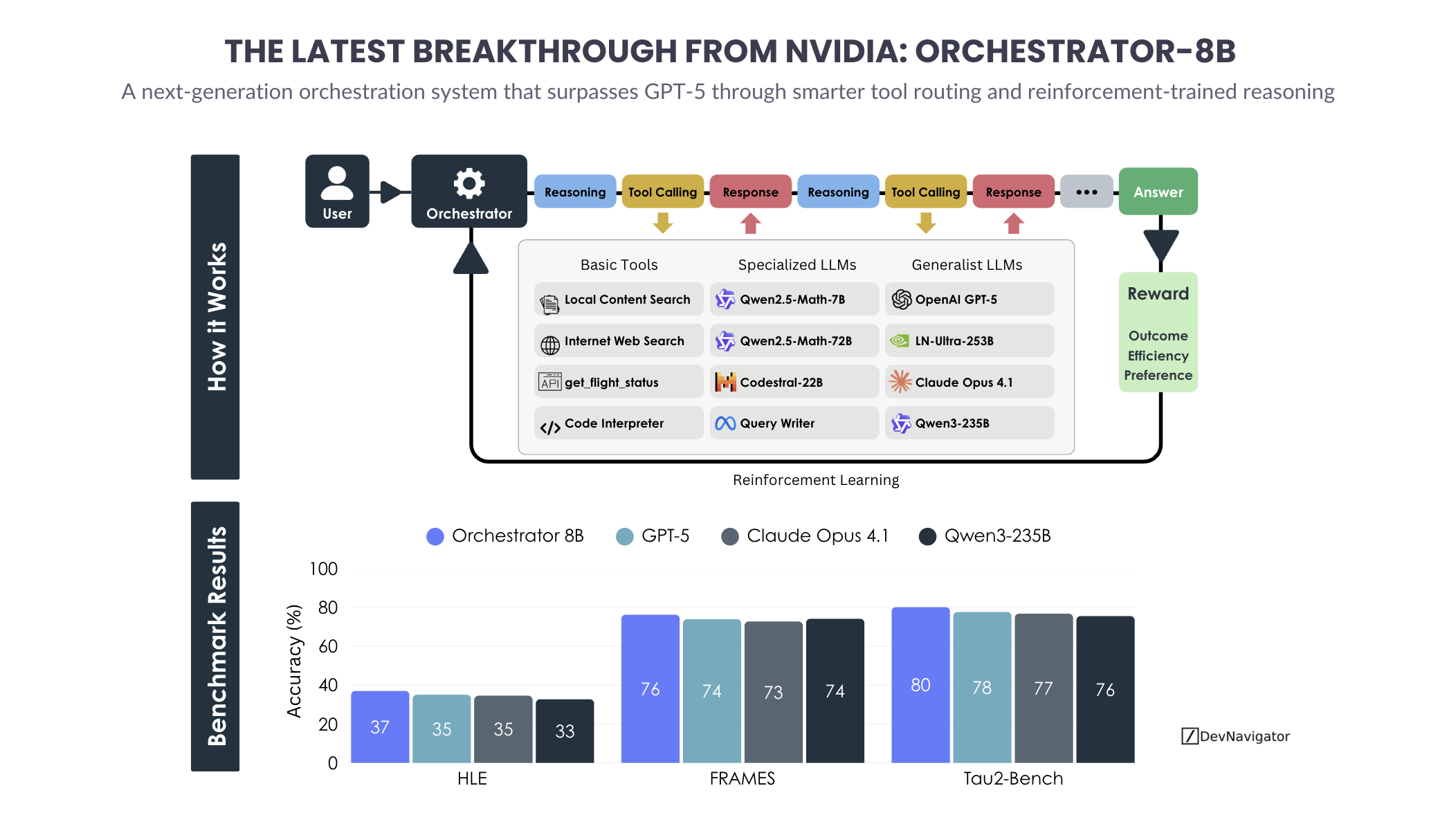

Artificial intelligence is entering a phase where raw model size matters less than how intelligence is coordinated. The rise of the AI Orchestrator reflects this shift clearly. NVIDIA’s Orchestrator-8B demonstrates that a smaller, well-directed system can outperform frontier models like GPT-5 on benchmarks such as HLE, FRAMES, and Tau-Bench by combining reasoning, tool use, and reinforcement learning in a structured way that mirrors how humans actually solve problems.

Executive Takeaways

- Orchestrated systems outperform standalone models: NVIDIA’s Orchestrator-8B achieves higher accuracy across HLE, FRAMES, and Tau-Bench than GPT-5, despite being far smaller.

- Tool-driven reasoning is now a competitive advantage: Blending retrieval, code execution, and specialist models delivers better results than relying on a single generalist LLM.

- Efficiency has become a core design goal: Accuracy alone is no longer enough. Cost, latency, and scalability now define real-world AI success.

Expanded Insights

The AI Orchestrator as a New System Pattern

The AI Orchestrator represents a clear break from the assumption that intelligence must live inside one massive model. Instead, the Orchestrator acts as a decision-making layer that routes tasks to the most appropriate resource. Simple queries may rely on local search or lightweight reasoning. Mathematical problems are delegated to math-specialized models. Code execution is handled by interpreters rather than language alone.
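The routing idea can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the category names, the keyword-based `classify` function, and the stub handlers are hypothetical, and a hand-written classifier stands in for the learned routing policy the article describes.

```python
from typing import Callable, Dict

# Hypothetical task categories; the article describes them only informally.
SIMPLE, MATH, CODE = "simple", "math", "code"


def classify(query: str) -> str:
    """Toy keyword classifier standing in for a learned routing policy."""
    lowered = query.lower()
    if "python" in lowered or "execute" in lowered:
        return CODE
    has_digit = any(ch.isdigit() for ch in query)
    has_operator = any(op in query for op in "+-*/=")
    if has_digit and has_operator:
        return MATH
    return SIMPLE


# Stub handlers; in a real system each would wrap an actual tool or model.
def local_search(query: str) -> str:
    return f"[local-search] {query}"


def math_model(query: str) -> str:
    return f"[math-model] {query}"


def code_interpreter(query: str) -> str:
    return f"[interpreter] {query}"


ROUTES: Dict[str, Callable[[str], str]] = {
    SIMPLE: local_search,
    MATH: math_model,
    CODE: code_interpreter,
}


def route(query: str) -> str:
    """Dispatch the query to the handler chosen by the classifier."""
    return ROUTES[classify(query)](query)
```

Keeping the routing table separate from the classifier means a new specialist can be added by registering one more handler, without touching the decision logic.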

This approach mirrors real professional workflows. A data scientist does not reason, search the web, and compute statistics using the same mental process. Each task is handled by a different tool or skill. The Orchestrator formalizes this behavior and learns how to sequence it efficiently through reinforcement learning.

Reinforcement Learning Beyond Correctness

A key insight from NVIDIA’s design is that reinforcement learning optimizes more than just accuracy. The reward signal incorporates outcome quality, efficiency, and user preference. This means the system learns when to stop early, when to escalate to a stronger model, and when a cheaper tool is sufficient.
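A multi-objective reward of this kind might look like the sketch below. The weighted-sum form, the weights, and the `Trajectory` fields are assumptions chosen for illustration; the article does not give the formula, and the actual reward in NVIDIA's design may be structured differently.

```python
from dataclasses import dataclass


@dataclass
class Trajectory:
    """Summary of one attempt at a task (hypothetical fields)."""
    correct: bool            # outcome quality, reduced to pass/fail here
    cost_usd: float          # total spend on model and tool calls
    latency_s: float         # wall-clock time for the attempt
    preference_score: float  # how well tool choices match user preference, in [0, 1]


def reward(
    t: Trajectory,
    w_outcome: float = 1.0,
    w_cost: float = 0.5,
    w_latency: float = 0.1,
    w_pref: float = 0.3,
) -> float:
    """Weighted sum: reward correctness and preference, penalize cost and latency."""
    return (
        w_outcome * float(t.correct)
        - w_cost * t.cost_usd
        - w_latency * t.latency_s
        + w_pref * t.preference_score
    )


# Illustrative values only; none of these numbers come from the article.
cheap_correct = Trajectory(correct=True, cost_usd=0.02, latency_s=3.0, preference_score=0.8)
pricey_correct = Trajectory(correct=True, cost_usd=0.50, latency_s=20.0, preference_score=0.8)
cheap_wrong = Trajectory(correct=False, cost_usd=0.02, latency_s=3.0, preference_score=0.8)
```

Under these weights, two correct attempts are ranked by how cheaply and quickly they finished, which is the pressure that teaches a policy to stop early or pick a cheaper tool.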

In practice, this produces a system that behaves strategically rather than exhaustively. Instead of throwing maximum compute at every problem, the Orchestrator adapts its depth of reasoning to the task. This is a major departure from the brute-force tendencies of frontier models and a critical step toward sustainable AI deployment.

Benchmark Performance Tells a Deeper Story

The benchmark results highlight why orchestration matters. On HLE, FRAMES, and Tau-Bench, Orchestrator-8B consistently outperforms GPT-5, Claude Opus 4.1, and Qwen3-235B. What makes this notable is not just the size of the accuracy gains but how they are achieved.

Rather than relying on a single reasoning pass, the Orchestrator cycles through reasoning, tool calls, and validation steps. Each iteration is informed by previous outcomes. The result is higher reliability in multi-step tasks where traditional LLMs tend to drift or hallucinate.
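The cycle of reasoning, tool calls, and validation can be sketched as a small loop. The function names (`orchestrate`, `propose`, `validate`), the turn limit, and the toy tools are all hypothetical; in the system the article describes, the proposal step is a trained model rather than a hand-written rule.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple

Step = Tuple[str, Any]  # (tool name, tool output)


def orchestrate(
    task: str,
    propose: Callable[[str, List[Step]], str],
    tools: Dict[str, Callable[[str], Any]],
    validate: Callable[[str, Any], bool],
    max_turns: int = 4,
) -> Tuple[Optional[Any], List[Step]]:
    """Alternate between choosing a tool, calling it, and checking the result."""
    history: List[Step] = []
    for _ in range(max_turns):
        tool_name = propose(task, history)   # reasoning step, informed by history
        output = tools[tool_name](task)      # tool call
        history.append((tool_name, output))
        if validate(task, output):           # validation step
            return output, history
    return None, history                     # turn budget exhausted


# Toy setup: a cheap guess first, then an exact calculation if the guess fails.
toy_tools = {
    "quick_guess": lambda task: 50,
    "calculator": lambda task: sum(range(1, 11)),
}


def toy_propose(task: str, history: List[Step]) -> str:
    return "quick_guess" if not history else "calculator"


def toy_validate(task: str, output: Any) -> bool:
    # Independent check using the closed form n * (n + 1) / 2 with n = 10.
    return output == 10 * 11 // 2


answer, trace = orchestrate(
    "Sum the integers from 1 to 10", toy_propose, toy_tools, toy_validate
)
```

Because each proposal sees the full history, a failed first attempt changes what is tried next instead of being repeated.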

Cost and Latency as Strategic Advantages

Perhaps the most consequential implication is economic. Frontier models are powerful but expensive, especially in workflows that require multiple calls per task. The Orchestrator minimizes this cost by reserving premium models for moments where they add the most value.
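One simple way to reserve a premium model for the cases that need it is a confidence-gated cascade, sketched below. This is a swapped-in technique used for illustration, not the article's method, which learns the escalation decision through reinforcement learning. The `Model` class, the cost units, the threshold, and the stub models are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Model:
    name: str
    cost_per_call: float                         # hypothetical cost units
    answer: Callable[[str], Tuple[str, float]]   # returns (answer, confidence)


def cascade(
    query: str, models: List[Model], threshold: float = 0.8
) -> Tuple[str, str, float]:
    """Try models from cheapest to most expensive; stop at the first confident answer."""
    if not models:
        raise ValueError("at least one model is required")
    spent = 0.0
    text, used = "", ""
    for model in models:
        text, confidence = model.answer(query)
        spent += model.cost_per_call
        used = model.name
        if confidence >= threshold:
            break
    # If no model was confident, the last (strongest) model's answer is returned.
    return text, used, spent


# Stub models: the small one is only confident on short queries.
def small_answer(query: str) -> Tuple[str, float]:
    confidence = 0.9 if len(query.split()) <= 5 else 0.4
    return f"small: {query}", confidence


def large_answer(query: str) -> Tuple[str, float]:
    return f"large: {query}", 0.95


MODELS = [
    Model("small", cost_per_call=1.0, answer=small_answer),
    Model("large", cost_per_call=20.0, answer=large_answer),
]

easy = cascade("Capital of France?", MODELS)
hard = cascade("Compare the long-term fiscal effects of these three policies", MODELS)
```

In this toy setup the easy query pays only for the small model, while the hard query pays for both calls, so the savings depend entirely on how often the cheap path is sufficient.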

The reported result is a 70 to 80 percent reduction in cost and latency alongside improved accuracy. For enterprises, this changes the feasibility of AI adoption. Use cases that were previously too expensive at scale, such as continuous monitoring, complex decision support, or large-scale workflow automation, become practical.

What This Means for Builders and Leaders

The success of the AI Orchestrator signals a broader transition in how AI systems should be designed. Intelligence is no longer about choosing the biggest model. It is about designing systems that reason, act, and adapt efficiently.

For builders, this means focusing on system architecture rather than model selection alone. For leaders, it reframes AI investment decisions around scalability and operational value, not just benchmark prestige. NVIDIA’s Orchestrator-8B shows that the future of AI belongs to systems that think less like monoliths and more like coordinated teams.

Source: https://arxiv.org/pdf/2511.21689