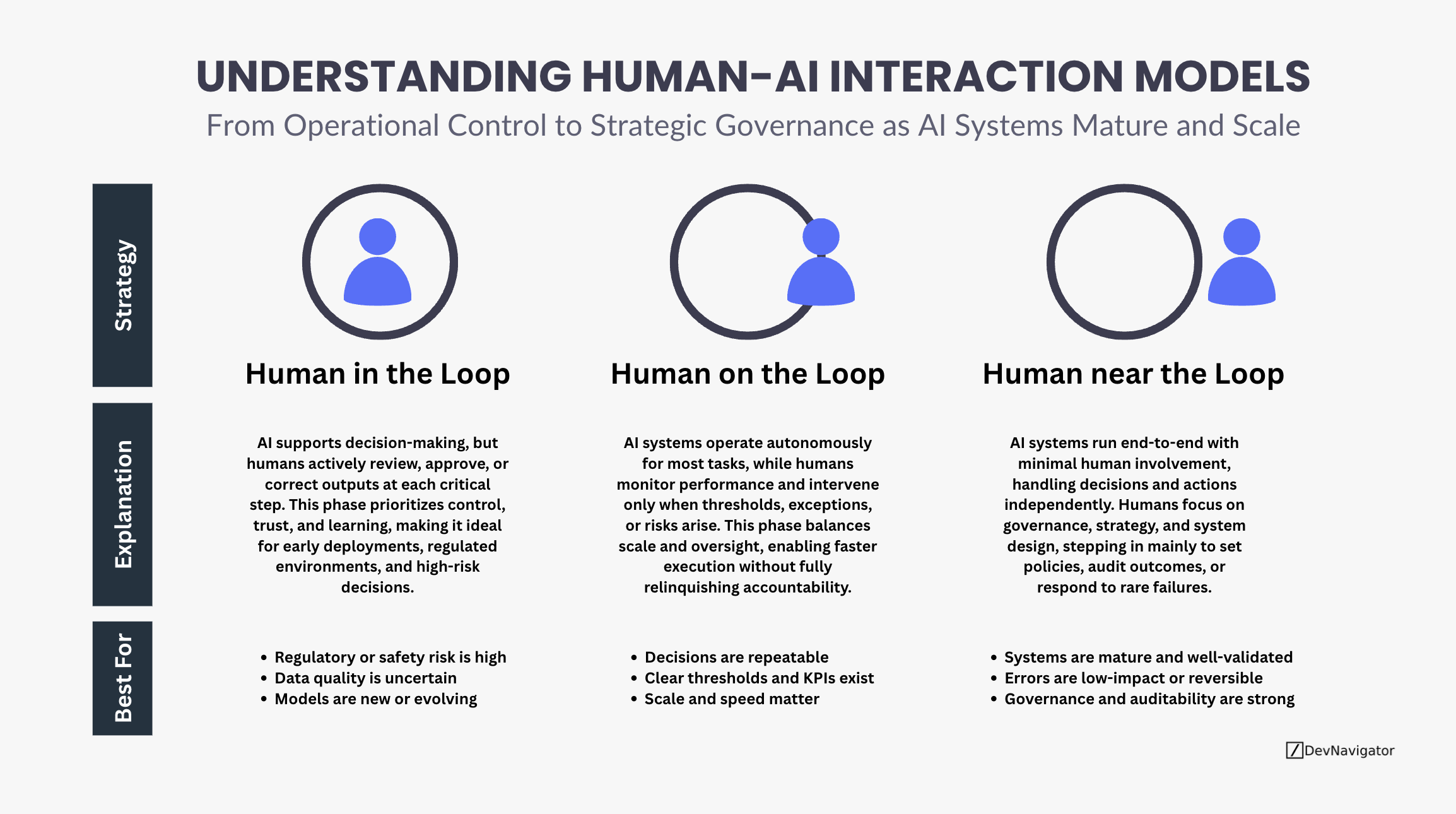

As artificial intelligence systems move from experimental tools to core operational infrastructure, the Human-AI model is undergoing a fundamental shift. Early AI deployments required constant human supervision, while modern systems increasingly operate autonomously at scale. Deciding where humans sit in the loop is no longer a technical nuance. It is a strategic decision that affects risk, accountability, speed, and trust. This evolution can be understood through three distinct but often overlapping modes of human involvement.

Executive Takeaways

- Human involvement in AI does not disappear as systems mature; it shifts from direct decision-making to governance, oversight, and system design.

- Most organizations operate across multiple modes simultaneously, matching the level of human involvement to each use case's risk, maturity, and business criticality.

- The goal is not full autonomy, but earned autonomy, supported by strong controls, monitoring, and accountability structures.

Expanded Insights

Human in the Loop: Control, Trust, and Learning

In the earliest and highest-risk phases of AI adoption, humans remain directly embedded in decision workflows. AI supports analysis and recommendations, but humans actively review, approve, or correct outputs at each critical step. This approach prioritizes control and learning over speed, and represents a strong starting point for Human-AI collaboration.

Human-in-the-loop systems are essential when regulatory or safety risks are high, data quality is uncertain, or models are still evolving. In regulated industries such as healthcare, pharmaceuticals, or finance, this mode ensures that accountability remains clearly defined while organizations build confidence in model behavior. Importantly, this phase is not a failure of automation; it is a necessary foundation for trust and model maturity.

Human on the Loop: Scaling with Oversight

As AI systems prove reliable and decisions become repeatable, organizations move toward a human-on-the-loop model. Here, AI systems operate autonomously for most tasks, while humans monitor performance and intervene only when predefined thresholds, exceptions, or risks arise.

This mode balances speed and scale with accountability. Humans are no longer approving every decision, but they remain responsible for outcomes. Clear KPIs, alerting mechanisms, and escalation paths become critical. Human-on-the-loop is often the target operating model for enterprise AI, enabling significant efficiency gains without relinquishing control. It reflects a shift from managing individual decisions to managing system behavior.
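To make the idea of predefined thresholds and escalation paths concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than a prescribed design: the `Decision` fields, the `route` and `escalation_rate` helpers, and the 0.90 confidence threshold are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical threshold: decisions below this confidence go to a human.
CONFIDENCE_THRESHOLD = 0.90


@dataclass
class Decision:
    case_id: str
    confidence: float                # model confidence, 0.0 to 1.0 (assumed available)
    flagged_exception: bool = False  # e.g. a predefined policy rule was tripped


def route(decision: Decision, threshold: float = CONFIDENCE_THRESHOLD) -> str:
    """Return 'auto' to let the AI act, or 'escalate' to hand off to a human."""
    if decision.flagged_exception or decision.confidence < threshold:
        return "escalate"
    return "auto"


def escalation_rate(decisions: list[Decision]) -> float:
    """Share of decisions escalated: one possible KPI for the human overseer."""
    if not decisions:
        return 0.0
    escalated = sum(1 for d in decisions if route(d) == "escalate")
    return escalated / len(decisions)
```

In a sketch like this, the human overseer does not see each decision. They watch the escalation rate and the escalated cases, and they adjust the threshold when system behavior drifts.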

Human near the Loop: Governance over Execution

In the most mature deployments, AI systems run end-to-end with minimal human involvement in day-to-day operations. Humans focus on governance, strategy, and system design rather than execution. Intervention is rare and typically limited to audits, policy updates, or responses to unexpected failures.

This mode is appropriate only when systems are well-validated, errors are low-impact or reversible, and governance and auditability are strong. Human accountability does not disappear; it moves upstream. Responsibility lies in setting the right policies, defining acceptable risk, and ensuring transparency. Human-near-the-loop systems represent not unchecked automation, but disciplined autonomy earned through rigorous controls. That mindset becomes more important as systems take on a larger share of end-to-end work.

A Practical Perspective

These three modes are not a linear maturity ladder where one permanently replaces the others. Most organizations operate all three simultaneously across different use cases. High-risk decisions may remain human-in-the-loop, operational workflows may run human-on-the-loop, and low-risk, high-volume processes may approach human-near-the-loop.

The strategic challenge is aligning the right level of human involvement with the right level of risk, maturity, and business impact. Organizations that succeed do not chase autonomy for its own sake. They design systems where humans remain accountable, even as AI takes on greater responsibility.
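One way to picture this alignment is as a simple per-use-case rule that encodes the criteria described for each mode. The Python sketch below is an assumption-laden illustration, not a recommended policy: the `UseCase` fields and the `select_mode` function are hypothetical, and real assessments would involve judgment that boolean flags cannot capture.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    IN_THE_LOOP = "human-in-the-loop"
    ON_THE_LOOP = "human-on-the-loop"
    NEAR_THE_LOOP = "human-near-the-loop"


@dataclass
class UseCase:
    # Hypothetical assessment flags, one per criterion named in the article.
    name: str
    high_regulatory_or_safety_risk: bool
    data_quality_uncertain: bool
    model_still_evolving: bool
    well_validated: bool
    errors_low_impact_or_reversible: bool
    strong_governance_and_audit: bool


def select_mode(uc: UseCase) -> Mode:
    """Pick a mode of human involvement for a single use case."""
    # Any high-risk or immature signal keeps humans directly in the workflow.
    if (uc.high_regulatory_or_safety_risk
            or uc.data_quality_uncertain
            or uc.model_still_evolving):
        return Mode.IN_THE_LOOP
    # Near-the-loop requires all three conditions to hold at once.
    if (uc.well_validated
            and uc.errors_low_impact_or_reversible
            and uc.strong_governance_and_audit):
        return Mode.NEAR_THE_LOOP
    # Otherwise, run autonomously with human monitoring and escalation.
    return Mode.ON_THE_LOOP
```

Because the rule is evaluated per use case, a single organization can end up with all three modes in its portfolio at the same time.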

Closing Thought

The evolution of Human-AI models is not about removing humans from the equation. It is about redefining where humans create the most value. As AI systems mature and scale, the human role shifts from operator to overseer, and ultimately to governor. Organizations that recognize and design for this shift will be best positioned to scale AI responsibly and sustainably.