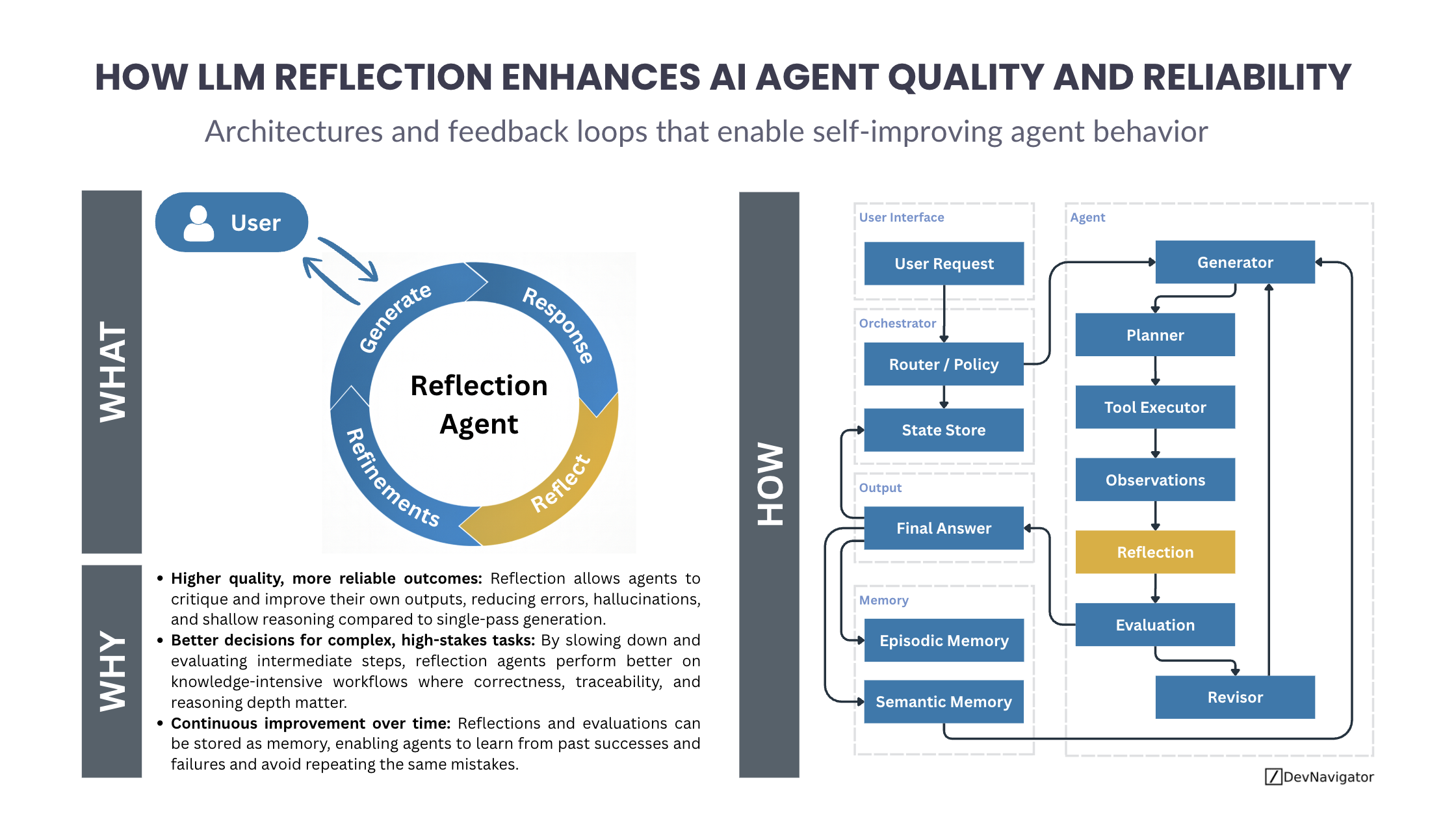

As AI agents move from simple chat interfaces to autonomous systems that plan, act, and decide, a critical limitation becomes clear: single-pass generation is not enough. Many failures in AI agents stem not from lack of capability, but from lack of self-evaluation. This is where LLM reflection plays a defining role. By enabling agents to critique, evaluate, and refine their own outputs, reflection transforms agents from fast responders into more reliable decision-makers.

Executive Takeaways

- LLM reflection improves output quality by allowing agents to critique and refine responses before final delivery.

- Reflection enables deeper reasoning for complex, high-stakes tasks where correctness and traceability matter.

- Reflection combined with memory creates learning systems that improve over time instead of repeating mistakes.

Expanded Insights

What Is LLM Reflection in AI Agents?

LLM reflection is a design pattern where an agent evaluates its own intermediate or final outputs using structured prompts or dedicated reflection steps. Instead of immediately returning the first generated answer, the agent pauses to ask questions such as: Is this correct? What is missing? What assumptions were made? This mirrors human review and transforms reactive, System-1-style behavior into slower, more deliberate reasoning.

In practice, reflection is implemented as a loop. The agent generates an initial response, reflects on its quality, identifies gaps or errors, and produces refinements. This iterative cycle is lightweight in concept but powerful in impact.
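
The loop described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: `reflect_and_refine`, `max_rounds`, and the prompt wording are hypothetical, and `llm` stands in for whatever text-in, text-out model call your stack provides.

```python
from typing import Callable


def reflect_and_refine(task: str, llm: Callable[[str], str], max_rounds: int = 2) -> str:
    """Generate a draft, then alternate critique and revision.

    `llm` is an assumed callable that maps a prompt string to a response string.
    """
    # Step 1: initial single-pass generation.
    draft = llm(f"Task: {task}\nWrite an answer.")

    for _ in range(max_rounds):
        # Step 2: reflection. Ask the questions a human reviewer would ask.
        critique = llm(
            f"Task: {task}\nDraft: {draft}\n"
            "Is this correct? What is missing? What assumptions were made? "
            "Reply with only 'OK' if no changes are needed."
        )
        # Step 3: stop early when the critique finds nothing to fix.
        if critique.strip().upper() == "OK":
            break
        # Step 4: refinement. Rewrite the draft using the critique.
        draft = llm(
            f"Task: {task}\nDraft: {draft}\nCritique: {critique}\n"
            "Rewrite the draft to address the critique."
        )
    return draft
```

The `max_rounds` cap matters: without it, a model that never replies "OK" would loop indefinitely.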

Why Reflection Improves Agent Quality

Many AI agent failures are not catastrophic errors but subtle ones: hallucinated facts, shallow reasoning, or incomplete answers. Prompt engineering can reduce such errors before generation, while reflection targets them directly after it. By explicitly critiquing its own outputs, an agent can reduce hallucinations, catch logical inconsistencies, and improve clarity.

This becomes especially valuable in domains such as research, analytics, software development, and regulated environments, where “mostly correct” is not good enough. Reflection increases confidence not by making the model smarter, but by giving the agent an explicit step in which to check its output for the kinds of errors it is prone to.

How Reflection Fits into Agent Architectures

Modern agent architectures separate responsibilities into distinct components: generation, planning, tool execution, observation, reflection, evaluation, and revision. Reflection sits downstream of action and observation and, together with the evaluation step, acts as a quality gate before answers are finalized.

In a typical flow, a generator produces an answer or plan. Tools may be executed to gather data. Observations are collected. The reflection component then critiques the result, often focusing on accuracy, completeness, or alignment with the original goal. An evaluation step determines whether the output is good enough or needs revision. If it needs revision, the agent revises and loops again.
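
One way to picture the flow above is as a loop over pluggable components. The sketch below is illustrative only: `AgentState`, `run_agent`, and the four callables are hypothetical names, and a real agent would back each callable with model calls and actual tools.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class AgentState:
    goal: str
    answer: str = ""
    observations: List[str] = field(default_factory=list)
    critiques: List[str] = field(default_factory=list)


def run_agent(
    goal: str,
    generate: Callable[[AgentState], str],
    run_tools: Callable[[AgentState], List[str]],
    reflect: Callable[[AgentState], str],
    is_good_enough: Callable[[AgentState], bool],
    max_loops: int = 3,
) -> AgentState:
    """Run generate -> tools -> reflect -> evaluate until the gate passes."""
    state = AgentState(goal=goal)
    for _ in range(max_loops):
        # Generation on the first pass, revision on later passes
        # (the generator can read earlier critiques from the state).
        state.answer = generate(state)
        # Tool execution and observation.
        state.observations.extend(run_tools(state))
        # Reflection: critique the current answer against the goal.
        state.critiques.append(reflect(state))
        # Evaluation: the quality gate that decides whether to loop again.
        if is_good_enough(state):
            break
    return state
```

Keeping reflection and evaluation as separate callables mirrors the separation of responsibilities: one produces the critique, the other decides what to do about it.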

This structure helps ensure that agents do not blindly proceed after a mistake, a common failure mode in purely linear agent designs.

Reflection and Memory: From Iteration to Learning

Reflection becomes even more powerful when paired with memory. Episodic memory can store successful and failed trajectories, while semantic memory captures generalized insights. Over time, this allows agents to avoid repeating the same errors and reuse patterns that worked well in the past.

Rather than improving only within a single session, reflection plus memory enables continuous improvement across tasks. This shifts agents from disposable tools into systems that accumulate experience, a key requirement for long-running or enterprise-grade AI applications.
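
As a rough sketch of how reflection and memory might fit together, the example below keeps episodic records of past attempts and a list of generalized lessons, then turns both into context for the next task. All names (`Episode`, `ReflectionMemory`, `build_context`) are hypothetical, and the keyword-overlap lookup is a deliberately naive stand-in for whatever retrieval method a real system would use.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Episode:
    task: str
    answer: str
    critique: str
    succeeded: bool


@dataclass
class ReflectionMemory:
    # Episodic memory: concrete successful and failed trajectories.
    episodes: List[Episode] = field(default_factory=list)
    # Semantic memory: generalized insights distilled from critiques.
    lessons: List[str] = field(default_factory=list)

    def record(self, episode: Episode, lesson: str = "") -> None:
        """Store an episode and, optionally, a lesson drawn from it."""
        self.episodes.append(episode)
        if lesson and lesson not in self.lessons:
            self.lessons.append(lesson)

    def relevant_failures(self, task: str) -> List[Episode]:
        """Return failed episodes whose task shares at least one word with `task`."""
        words = set(task.lower().split())
        return [
            e for e in self.episodes
            if not e.succeeded and words & set(e.task.lower().split())
        ]

    def build_context(self, task: str) -> str:
        """Assemble lessons and related past mistakes into prompt context."""
        lines = [f"Lesson: {lesson}" for lesson in self.lessons]
        lines += [
            f"Past mistake on '{e.task}': {e.critique}"
            for e in self.relevant_failures(task)
        ]
        return "\n".join(lines)
```

The string returned by `build_context` would be prepended to the generation or reflection prompt, so that a critique written in one session can influence the next.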

When Reflection Is Worth the Cost

Reflection introduces additional computation and latency. It is not appropriate for every use case. However, for knowledge-intensive, high-stakes, or decision-driven workflows, the tradeoff is often worth it. When quality, trust, and reasoning depth matter more than raw speed, reflection becomes a foundational capability rather than an optional enhancement.
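
To make the tradeoff concrete, here is a back-of-the-envelope sketch. It assumes a loop in which each reflection round costs one critique call and one revision call on top of the initial generation; the function names, the `high_stakes` flag, and the per-call timing are all hypothetical inputs you would supply yourself.

```python
def worst_case_llm_calls(max_rounds: int) -> int:
    """One initial generation, plus a critique and a revision per round."""
    return 1 + 2 * max_rounds


def should_reflect(
    high_stakes: bool,
    latency_budget_s: float,
    seconds_per_call: float,
    max_rounds: int = 1,
) -> bool:
    """Reflect only when the task is high-stakes and the worst case fits the budget."""
    worst_case_s = worst_case_llm_calls(max_rounds) * seconds_per_call
    return high_stakes and worst_case_s <= latency_budget_s
```

Under these assumptions, two rounds of reflection mean up to five model calls instead of one, which is why a routing check like this is usually applied before the loop rather than reflecting on every request.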

Final Thoughts

LLM reflection is not a model upgrade, but an architectural one. It changes how agents behave, how they recover from mistakes, and how they improve over time. As AI agents become more autonomous and embedded in real-world systems, reflection will be one of the key mechanisms that separates unreliable automation from trustworthy AI.

Reflection does not make agents perfect. It makes them better, safer, and more accountable, and that is exactly what modern AI systems need.