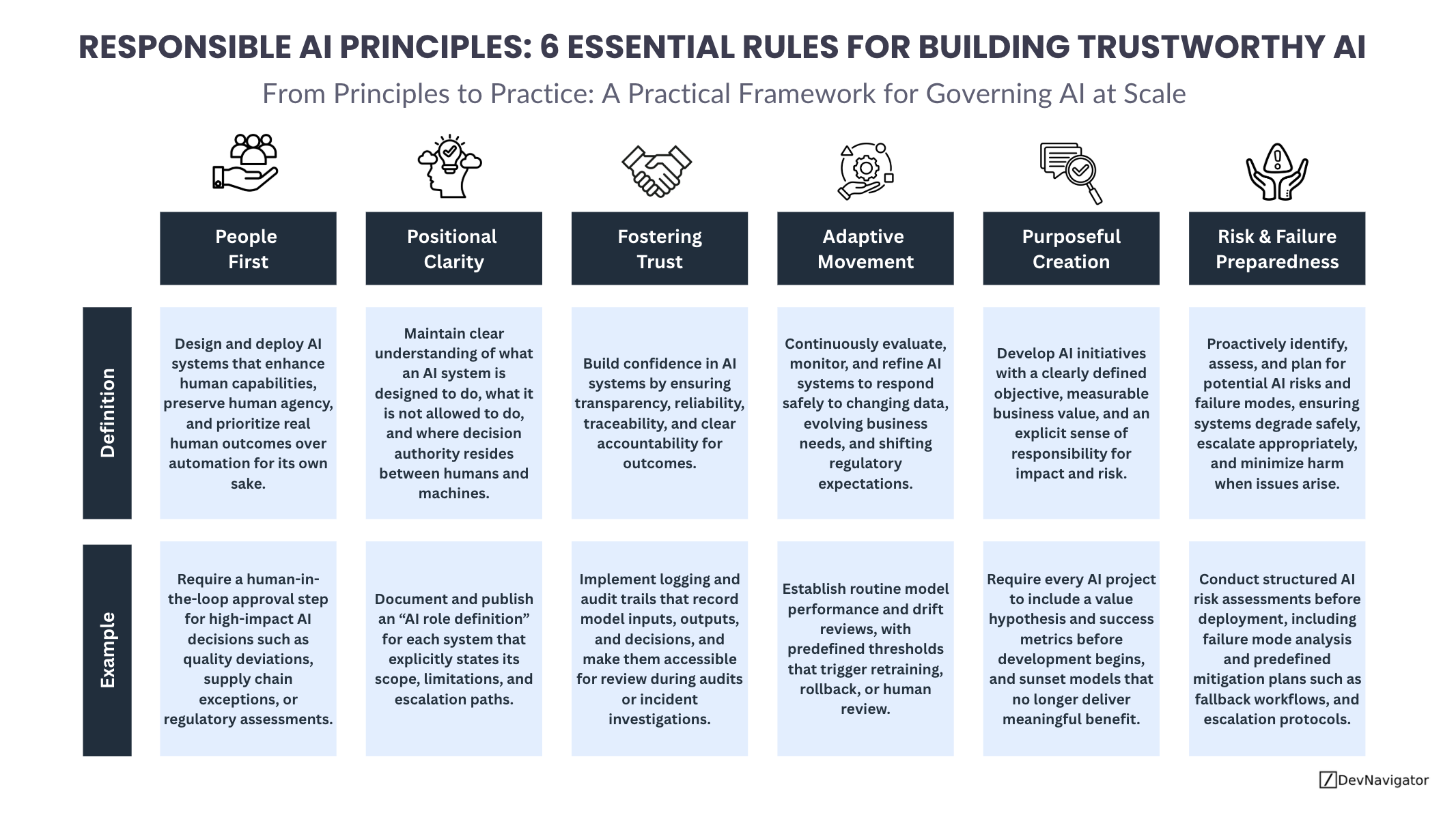

Responsible AI Principles are no longer abstract ideals reserved for policy documents or ethics boards. As artificial intelligence becomes embedded in everyday business decisions, these principles must translate into concrete actions that shape how systems are designed, deployed, monitored, and governed. This article outlines six Responsible AI Principles that help organizations move from intention to execution, balancing innovation with accountability, trust, and resilience. Together, they provide a practical framework for building AI systems that deliver value while managing risk in real operational environments.

Executive Takeaways

- Responsible AI Principles must move beyond ethics statements and become embedded in day-to-day system design, governance, and operations.

- Clear ownership, transparency, and adaptability are just as critical as model performance when deploying AI at scale.

- Organizations that operationalize Responsible AI Principles are better positioned to build trust, meet regulatory expectations, and sustain long-term value.

Expanded Insights

People First

At the core of Responsible AI Principles is a simple truth: AI exists to serve people, not to replace them indiscriminately. Teams should develop systems that enhance human capabilities, preserve agency, and improve outcomes that matter in the real world. When AI is treated as a decision maker rather than a decision-support tool, organizations risk eroding trust and accountability. Putting people first means designing workflows where humans remain responsible for high-impact decisions and where AI augments judgment rather than overriding it. This principle is especially critical in regulated, safety-sensitive, or ethically complex environments.
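As a minimal sketch of this idea, the routing rule below sends every high-impact case, and any case where the model is not confident, to a person, and lets the model's suggestion be applied directly only for low-impact, high-confidence cases. The names `Impact`, `Recommendation`, `RoutedDecision`, `route`, and the 0.95 auto-apply threshold are hypothetical choices for illustration, not a standard API.

```python
from dataclasses import dataclass
from enum import Enum


class Impact(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass(frozen=True)
class Recommendation:
    action: str        # what the model suggests doing
    confidence: float  # model confidence in [0, 1]


@dataclass(frozen=True)
class RoutedDecision:
    action: str  # the model's suggested action, passed along as advice
    status: str  # "auto_applied" or "pending_human_review"


def route(rec: Recommendation, impact: Impact, auto_threshold: float = 0.95) -> RoutedDecision:
    """Keep humans responsible for high-impact or uncertain decisions (hypothetical policy)."""
    if impact is Impact.HIGH or rec.confidence < auto_threshold:
        # The model only advises here; a person makes the final call.
        return RoutedDecision(rec.action, "pending_human_review")
    # Low-impact and high-confidence: the model's suggestion is applied directly.
    return RoutedDecision(rec.action, "auto_applied")
```

Note that `route(Recommendation("approve", 0.99), Impact.HIGH)` still returns a `pending_human_review` status: impact takes precedence over confidence, so no level of model certainty removes a human from a high-impact decision.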

Positional Clarity

One of the most common failures in AI adoption is confusion about what a system is actually allowed to do. Responsible AI Principles require positional clarity so that everyone understands the role of AI within a process. This includes defining what the system is designed to do, what it must not do, and where decision authority ultimately resides. Without this clarity, AI outputs can be misinterpreted as authoritative rather than advisory, leading to unintended consequences. Clear role definitions also make escalation paths and accountability easier to enforce.
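One way to make a system's role explicit is to write it down as data that both people and software can check. The sketch below assumes a hypothetical `SystemRole` record listing the system's purpose, allowed actions, prohibited actions, the accountable human owner, and an escalation contact; the field names and the example billing assistant are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemRole:
    purpose: str                        # what the system is designed to do
    allowed_actions: frozenset[str]     # actions the system may take
    prohibited_actions: frozenset[str]  # actions the system must never take
    decision_owner: str                 # the human role accountable for outcomes
    escalation_contact: str             # where out-of-scope requests are sent

    def __post_init__(self) -> None:
        # A role definition that both allows and forbids the same action is ambiguous.
        overlap = self.allowed_actions & self.prohibited_actions
        if overlap:
            raise ValueError(f"actions both allowed and prohibited: {sorted(overlap)}")

    def authorize(self, action: str) -> str:
        """Return 'blocked', 'allowed', or an escalation target for unlisted actions."""
        if action in self.prohibited_actions:
            return "blocked"
        if action in self.allowed_actions:
            return "allowed"
        # Anything not explicitly listed is treated as out of scope and escalated.
        return f"escalate:{self.escalation_contact}"


billing_assistant = SystemRole(
    purpose="Draft replies to customer billing questions",
    allowed_actions=frozenset({"draft_reply", "summarize_ticket"}),
    prohibited_actions=frozenset({"issue_refund", "close_account"}),
    decision_owner="billing team lead",
    escalation_contact="billing-ops",
)
```

Here `billing_assistant.authorize("issue_refund")` returns `"blocked"`, and an unlisted action such as `"change_plan"` returns `"escalate:billing-ops"`. Defaulting unlisted actions to escalation, rather than permission, keeps the system advisory unless someone has deliberately widened its role.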

Fostering Trust

Trust is earned through consistent behavior, not promises. Responsible AI Principles emphasize transparency, reliability, traceability, and accountability as foundational requirements. Stakeholders must be able to understand how decisions are made, verify outcomes, and investigate issues when they arise. Logging, audit trails, and performance monitoring are not optional features. They are essential mechanisms for building confidence across technical teams, leadership, regulators, and end users. When trust breaks down, even high-performing models quickly lose organizational support.
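Audit trails are most useful when records cannot be quietly edited after the fact. A common technique is hash chaining: each log entry stores the SHA-256 hash of the previous entry, so altering an earlier record breaks verification. The minimal sketch below uses only the Python standard library; the `AuditLog` class and the fields it records (model version, inputs, output, reviewer) are assumptions about what a team might choose to capture, not a prescribed schema.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained record of model decisions (illustrative sketch)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, model_version: str, inputs: dict, output: str,
               reviewer: Optional[str] = None) -> dict:
        body = {
            "seq": len(self.entries),
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "inputs": inputs,      # must be JSON-serializable
            "output": output,
            "reviewer": reviewer,  # human who reviewed the decision, if any
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; returns False if any stored entry was altered or reordered."""
        prev_hash = GENESIS_HASH
        for i, entry in enumerate(self.entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["seq"] != i or body["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

A hash chain is tamper-evident rather than tamper-proof: someone with write access could truncate the tail or recompute the entire chain. Storing the latest hash somewhere the log's writers cannot modify closes most of that gap.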

Adaptive Movement

AI systems do not operate in static environments. Data changes, business priorities evolve, and regulatory expectations shift. Responsible AI Principles therefore require continuous evaluation and refinement. Adaptive movement means monitoring model performance, detecting drift, and responding with controlled updates rather than reactive fixes. It also means knowing when not to adapt automatically and when to pause, escalate, or involve human review. Organizations that treat AI as a living system rather than a one-time deployment are far more resilient over time.
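Drift detection can start with something as simple as comparing the distribution of a model input or score today against a baseline. The sketch below uses the Population Stability Index (PSI), one widely used drift metric among several, computed over pre-binned counts. The 0.1 and 0.25 thresholds are common rules of thumb rather than a standard, and mapping them to "continue", "flag for review", and "pause and escalate" is a hypothetical policy that mirrors the point above about not always adapting automatically.

```python
import math
from typing import Sequence


def to_proportions(counts: Sequence[int]) -> list[float]:
    total = sum(counts)
    if total <= 0:
        raise ValueError("counts must contain at least one observation")
    return [c / total for c in counts]


def population_stability_index(baseline: Sequence[int], current: Sequence[int],
                               eps: float = 1e-6) -> float:
    """PSI = sum over bins of (a - e) * ln(a / e), where e and a are bin proportions."""
    if len(baseline) != len(current):
        raise ValueError("baseline and current must use the same bins")
    psi = 0.0
    for e, a in zip(to_proportions(baseline), to_proportions(current)):
        e, a = max(e, eps), max(a, eps)  # floor empty bins to avoid log(0) and division by zero
        psi += (a - e) * math.log(a / e)
    return psi


def drift_action(psi: float, warn: float = 0.1, stop: float = 0.25) -> str:
    """Hypothetical policy: small shifts continue, moderate shifts get reviewed, large shifts pause."""
    if psi >= stop:
        return "pause_and_escalate"
    if psi >= warn:
        return "flag_for_review"
    return "continue"
```

For example, a baseline of `[250, 250, 250, 250]` against current counts of `[100, 200, 300, 400]` gives a PSI of roughly 0.23, which this policy flags for human review instead of triggering an automatic retrain.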

Purposeful Creation

Not every problem requires AI, and not every AI project deserves to live forever. Purposeful creation ensures that Responsible AI Principles remain tied to real business value. Every AI initiative should start with a clearly defined objective, measurable success criteria, and an explicit understanding of potential impact and risk. Just as important, organizations must be willing to sunset systems that no longer deliver meaningful benefit. Purpose provides the justification for investment and the discipline to stop when value fades.
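Defining success criteria up front also makes the decision to retire a system less subjective. As a hypothetical sketch, the check below compares each review period's metrics against explicit targets and recommends sunsetting after a run of consecutive misses. The metric names, targets, and three-period limit are placeholders an organization would set for itself.

```python
from dataclasses import dataclass
from typing import Mapping, Sequence


@dataclass(frozen=True)
class SuccessCriterion:
    metric: str
    target: float
    higher_is_better: bool = True

    def met(self, value: float) -> bool:
        return value >= self.target if self.higher_is_better else value <= self.target


def review_initiative(criteria: Sequence[SuccessCriterion],
                      history: Sequence[Mapping[str, float]],
                      max_missed_periods: int = 3) -> str:
    """Return 'keep', 'at_risk', or 'recommend_sunset' from periods ordered oldest to newest.

    A period missing a required metric raises KeyError, on the view that an
    unmeasured objective should not count as a met one.
    """
    missed_streak = 0
    for period in history:
        if all(c.met(period[c.metric]) for c in criteria):
            missed_streak = 0  # a fully successful period resets the streak
        else:
            missed_streak += 1
    if missed_streak >= max_missed_periods:
        return "recommend_sunset"
    if missed_streak > 0:
        return "at_risk"
    return "keep"
```

The output is a recommendation, not an automatic shutdown: it gives the accountable owner a documented, pre-agreed trigger for the conversation about whether the system still earns its keep.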

Risk and Failure Preparedness

Even well-designed AI systems can fail. Responsible AI Principles demand proactive planning for those moments. Risk and failure preparedness involves identifying potential failure modes, assessing their impact, and defining how systems should degrade safely when things go wrong. This includes fallback workflows, escalation protocols, and predefined mitigation plans. Preparedness does not slow innovation. It enables organizations to move faster with confidence, knowing they can respond effectively when issues arise.
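A fallback path can be expressed directly in code. The sketch below wraps a model call so that an error, an out-of-range confidence, or a low-confidence prediction degrades to a simpler rule-based answer and flags the case for escalation. The `model` and `fallback` callables, the 0.7 confidence floor, and the reason codes are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Outcome:
    value: str
    source: str                   # "model" or "fallback"
    escalate: bool                # True when a human should look at this case
    reason: Optional[str] = None  # why the fallback was used, if it was


def predict_with_fallback(
    features: dict,
    model: Callable[[dict], tuple[str, float]],
    fallback: Callable[[dict], str],
    min_confidence: float = 0.7,
) -> Outcome:
    """Use the model when it answers confidently; otherwise degrade to a simple rule and escalate."""
    try:
        label, confidence = model(features)
    except Exception as exc:  # outage, timeout, malformed input: all degrade the same way
        return Outcome(fallback(features), "fallback", True, f"model_error: {type(exc).__name__}")
    if not 0.0 <= confidence <= 1.0:
        return Outcome(fallback(features), "fallback", True, "invalid_confidence")
    if confidence < min_confidence:
        return Outcome(fallback(features), "fallback", True, "low_confidence")
    return Outcome(label, "model", False)
```

Catching every exception is deliberate here: in a safety-oriented wrapper the goal is that any model failure lands on the fallback path, with the reason preserved for whoever handles the escalation.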

Closing Perspective

Responsible AI Principles are not constraints on innovation. They are enablers of sustainable progress. By grounding AI systems in human outcomes, clarity of purpose, operational trust, adaptability, and preparedness, organizations can scale AI responsibly while avoiding the common pitfalls that undermine adoption. As AI continues to move deeper into core business processes, these principles offer a practical foundation for building systems that are not only powerful but also dependable.