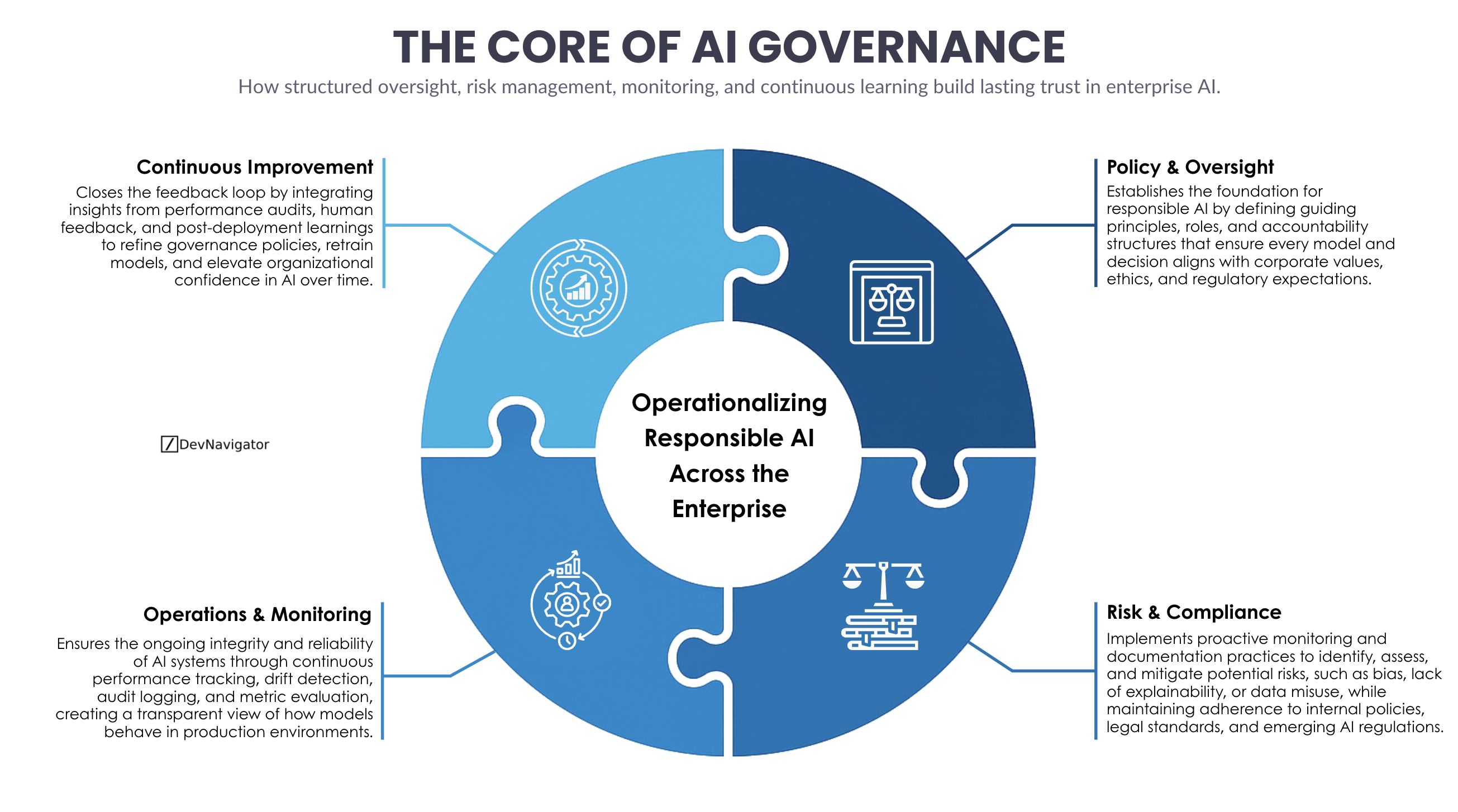

AI Governance has moved from a compliance checkbox to a defining capability for modern enterprises. As artificial intelligence systems increasingly influence decisions, workflows, and customer outcomes, organizations are realizing that trust is not created by models alone. It is created by structure. This article breaks down AI Governance into four practical pillars that allow enterprises to operationalize responsible AI at scale: policy and oversight, risk and compliance, operations and monitoring, and continuous improvement. Rather than treating governance as a blocker, this framework positions AI Governance as an enabler of speed, reliability, and long-term confidence across the business.

Executive Takeaways

- AI Governance succeeds when it is operationalized, not when it lives only in policy documents or review boards.

- Trust in AI systems is built through continuous oversight, combining monitoring, risk controls, and human feedback loops.

- The most effective AI Governance programs evolve over time, using real production insights to improve both models and governance practices.

Expanded Insights

Policy and Oversight: The Foundation of AI Governance

At its core, AI Governance starts with clarity. Policy and oversight define what responsible AI means for an organization and how accountability is assigned. This includes establishing principles around fairness, transparency, security, and ethical use, but it also goes further by defining roles, approval paths, and decision rights. Strong AI Governance ensures that every model, whether experimental or production-grade, aligns with enterprise values and regulatory expectations.

Without this foundation, organizations often struggle with inconsistent practices across teams. One group may prioritize speed, another may over-index on risk avoidance, and neither achieves sustainable outcomes. Effective AI Governance harmonizes these tensions by setting guardrails that enable innovation while protecting the organization.
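One way to keep roles and approval paths consistent across teams is to record them as data that tooling can check. The sketch below is illustrative only: the tier names, role names, and the `missing_approvals` helper are assumptions made for this example, not part of any specific framework.

```python
# Hypothetical approval paths per deployment tier (names are assumptions).
APPROVAL_PATHS = {
    "experimental": ["model_owner"],
    "internal": ["model_owner", "risk_officer"],
    "customer_facing": ["model_owner", "risk_officer", "governance_board"],
}


def missing_approvals(tier, approvals):
    """Return the required roles that have not yet signed off.

    An unknown tier raises KeyError, so a model cannot slip through
    simply because nobody defined a path for it.
    """
    required = APPROVAL_PATHS[tier]
    return [role for role in required if role not in approvals]


# Usage: a customer-facing model approved only by its owner.
outstanding = missing_approvals("customer_facing", {"model_owner"})
```

Keeping the paths in one shared structure means a fast-moving team and a risk-averse team are both measured against the same guardrails.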

Risk and Compliance: Moving from Reactive to Proactive Control

Risk and compliance are where AI Governance becomes tangible. This pillar focuses on identifying and mitigating risks before they materialize in production. These risks include model bias, data drift, explainability gaps, privacy exposure, and regulatory noncompliance. Mature AI Governance programs embed risk assessments directly into the AI lifecycle rather than treating them as one-time checkpoints.

Proactive monitoring, structured documentation, and traceability are critical here. Enterprises operating in regulated environments, such as healthcare, finance, and life sciences, rely on AI Governance to demonstrate adherence to internal controls and external regulations. When done well, risk and compliance do not slow teams down. They reduce rework, prevent costly incidents, and build confidence with auditors and leadership.
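To illustrate what embedding risk assessments in the lifecycle might look like, here is a minimal sketch of a promotion gate that refuses to advance a model while any risk check is missing or failing. The check names mirror the risks listed above; the `RiskAssessment` class and `promotion_blockers` function are hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Checks taken from the risk categories named in this section.
REQUIRED_CHECKS = ("bias", "data_drift", "explainability", "privacy", "regulatory")


@dataclass
class RiskAssessment:
    """Hypothetical record of risk checks for one model at one lifecycle stage."""
    model_name: str
    stage: str
    results: Dict[str, bool] = field(default_factory=dict)  # check name -> passed?


def promotion_blockers(assessment: RiskAssessment) -> List[str]:
    """List the reasons a model may not move to the next stage."""
    blockers = []
    for check in REQUIRED_CHECKS:
        if check not in assessment.results:
            blockers.append(f"{check}: not assessed")
        elif not assessment.results[check]:
            blockers.append(f"{check}: failed")
    return blockers


# Usage: an empty list means the gate is open.
assessment = RiskAssessment("churn-model", "staging", {"bias": True, "privacy": False})
blockers = promotion_blockers(assessment)
```

Because the gate runs at every stage transition rather than once, the blocker list also doubles as structured documentation for auditors.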

Operations and Monitoring: Where AI Governance Becomes Real

AI Governance lives or dies in production. Operations and monitoring ensure that AI systems behave as expected after deployment. This includes performance tracking, drift detection, audit logging, and alerting mechanisms that surface issues early. Governance without operational visibility is governance in name only.
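As a concrete example of drift detection, the sketch below computes the Population Stability Index (PSI), one common drift statistic, between a baseline sample and a production sample of a single numeric feature. The article does not prescribe a particular method; PSI, the bin count, and the 0.2 alert threshold (a frequently quoted rule of thumb) are assumptions to tune for your own data.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """PSI between a baseline sample and a production sample."""
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline has no spread; PSI is undefined")
    width = (hi - lo) / bins

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Clamp values outside the baseline range into the edge bins.
            idx = max(0, min(bins - 1, int((v - lo) / width)))
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_alert(psi: float, threshold: float = 0.2) -> bool:
    """Assumed rule of thumb: PSI above 0.2 suggests a significant shift."""
    return psi > threshold


# Usage: compare training-time values with a shifted production sample.
baseline = [i / 100 for i in range(100)]
production = [v + 0.5 for v in baseline]
should_alert = drift_alert(population_stability_index(baseline, production))
```

In practice a check like this would run on a schedule per feature, with alerts routed to the team that owns the model.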

This pillar transforms AI Governance from a static framework into a living system. By continuously observing how models perform in real environments, organizations gain insight into reliability, robustness, and unintended consequences. These insights allow teams to intervene quickly, retrain models when needed, and maintain trust with users and stakeholders.

Importantly, operations-focused AI Governance also creates transparency. Decision-makers can see how models are being used, how often they fail, and where improvements are required. This transparency is essential for scaling AI responsibly across the enterprise.
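The kind of transparency described here can be derived from audit logs. The following sketch assumes a hypothetical log format in which each record carries a `model` name and a `status` field, and summarizes how often each model is called and how often it fails.

```python
from collections import defaultdict


def usage_summary(audit_log):
    """Aggregate call counts and failure rates per model.

    Each record is assumed to be a dict with "model" and "status" keys,
    where any status other than "ok" counts as a failure.
    """
    totals = defaultdict(lambda: {"calls": 0, "failures": 0})
    for record in audit_log:
        entry = totals[record["model"]]
        entry["calls"] += 1
        if record["status"] != "ok":
            entry["failures"] += 1
    return {
        model: {**counts, "failure_rate": counts["failures"] / counts["calls"]}
        for model, counts in totals.items()
    }


# Usage with a few illustrative records.
log = [
    {"model": "churn", "status": "ok"},
    {"model": "churn", "status": "ok"},
    {"model": "churn", "status": "ok"},
    {"model": "churn", "status": "error"},
]
summary = usage_summary(log)
```

A summary like this gives decision-makers a shared view of usage and reliability without requiring them to read raw logs.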

Continuous Improvement: Closing the AI Governance Loop

The final pillar is often the most overlooked, yet it is arguably the most powerful. Continuous improvement ensures that AI Governance evolves alongside the organization’s AI maturity. Feedback from audits, monitoring systems, and human reviewers feeds directly into policy updates, model retraining strategies, and process refinements.

This learning loop is what separates fragile governance programs from resilient ones. As AI systems encounter new data, new use cases, and new risks, AI Governance must adapt. Continuous improvement allows enterprises to refine controls without starting from scratch, reinforcing confidence over time.
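One simple way to close the loop is to pool findings from audits, monitoring, and human review, then flag the controls that keep coming up. The sketch below is an assumption about how such a loop could be wired: the finding format, the control names, and the recurrence threshold are all hypothetical.

```python
from collections import Counter


def controls_needing_review(findings, min_occurrences=2):
    """Return controls cited in at least `min_occurrences` findings.

    Each finding is assumed to be a dict with "source" and "control" keys.
    """
    counts = Counter(finding["control"] for finding in findings)
    return sorted(c for c, n in counts.items() if n >= min_occurrences)


# Usage: findings gathered from three feedback channels.
findings = [
    {"source": "audit", "control": "bias_review"},
    {"source": "monitoring", "control": "bias_review"},
    {"source": "human_review", "control": "access_logging"},
]
to_review = controls_needing_review(findings)
```

Flagging only recurring issues lets teams refine the specific controls that are underperforming instead of rewriting the whole governance program.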

When organizations treat AI Governance as a continuous capability rather than a fixed rulebook, they unlock faster adoption, stronger alignment with business goals, and higher organizational trust in AI-driven decisions.

Why AI Governance Is a Strategic Advantage

AI Governance is no longer just about avoiding harm. It is about enabling scale. Enterprises that invest in robust AI Governance move faster because they spend less time firefighting and rebuilding trust. They deploy AI with confidence, knowing that oversight, monitoring, and improvement mechanisms are already in place.

As AI becomes embedded in core operations, AI Governance emerges as the quiet force that determines whether innovation compounds or collapses under its own weight. The organizations that get this right will do more than meet regulatory expectations. They will lead with confidence.