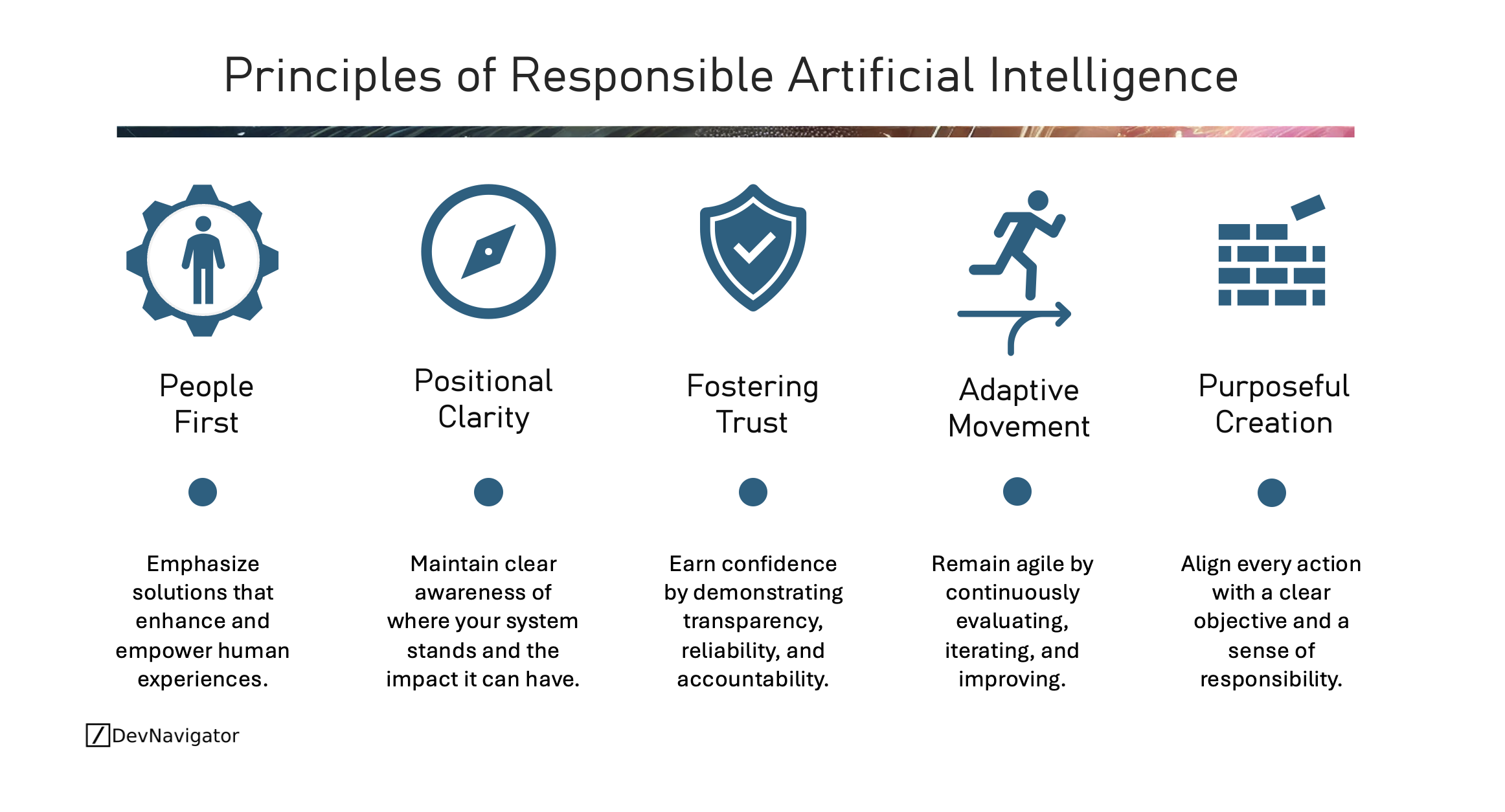

Responsible Artificial Intelligence is no longer a theoretical concept reserved for policy discussions. It is a practical necessity for organizations deploying AI at scale. As AI systems increasingly shape decisions in healthcare, finance, manufacturing, and public services, the stakes are rising. The five principles outlined here offer a grounded framework for implementing Responsible Artificial Intelligence in real environments. By focusing on People First design, Positional Clarity, Fostering Trust, Adaptive Movement, and Purposeful Creation, organizations can align innovation with accountability and long-term value.

Executive Takeaways

- Responsible Artificial Intelligence begins with people, not models, and must prioritize human impact over technical novelty.

- Clear governance, transparency, and accountability mechanisms are essential to operationalize Responsible Artificial Intelligence at scale.

- Sustainable AI success depends on continuous evaluation, iteration, and alignment with clear strategic purpose.

Expanded Insights

People First: Designing Around Human Impact

At its core, Responsible Artificial Intelligence is about enhancing human capability rather than replacing human judgment without oversight. AI systems influence hiring decisions, medical diagnoses, credit approvals, and supply chain operations. When these systems are not designed with human outcomes in mind, they can cause harm that nobody intended.

A People First approach requires proactive consideration of fairness, accessibility, usability, and impact. This includes testing for bias, ensuring diverse representation in training data where possible, and designing human-in-the-loop review mechanisms for high-risk decisions. Responsible Artificial Intelligence frameworks from organizations such as the OECD and NIST emphasize that AI should augment human judgment, not obscure or override it.
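
One way to make "testing for bias" concrete is to compare how often each group receives a favorable outcome. The sketch below is a minimal illustration, not a complete fairness audit: the function names, the sample data, and the 0.8 review threshold are all assumptions chosen for the example, and a real review would pick metrics and cutoffs that suit the decision being made.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favorable outcomes for each group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the system produced a favorable decision.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Ratio of the lowest to the highest group selection rate (1.0 = parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome
    return min(rates.values()) / highest


# Hypothetical audit sample: (group label, favorable decision?)
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = selection_rates(sample)
ratio = disparity_ratio(rates)

REVIEW_THRESHOLD = 0.8  # assumed cutoff for this example only
needs_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 3), needs_review)
```

A low ratio does not prove the system is unfair, but it is a useful trigger for sending the decision path to human review.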

In practice, this means asking a simple question before deployment: who benefits, and who might be harmed?

Positional Clarity: Knowing Where You Stand

Responsible Artificial Intelligence demands situational awareness. Organizations must understand where their AI systems sit along a spectrum of risk and autonomy. A recommendation engine for content personalization carries a very different level of risk than a system guiding clinical decisions.

Positional Clarity involves documenting model capabilities, limitations, training data sources, and performance boundaries. It also requires clarity on regulatory obligations, especially in industries subject to compliance standards. In sectors like healthcare and finance, Responsible Artificial Intelligence must align with existing governance frameworks and audit expectations.

This principle reduces overconfidence. It ensures that decision-makers understand not just what an AI system can do, but what it cannot reliably do. Transparency documentation, model cards, and internal review boards are practical tools that reinforce Responsible Artificial Intelligence in this dimension.
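
As an illustration of what a model card might capture, the sketch below stores the items named above (capabilities, limitations, training data sources, and boundaries) in a small structured record. The field names, the `ModelCard` class, and the example system are hypothetical, and this is not a standard schema. The point is only that documentation can be checked for gaps before sign-off.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    """Minimal, assumed structure for internal model documentation."""

    name: str
    intended_use: str
    risk_tier: str
    training_data_sources: list = field(default_factory=list)
    known_limitations: list = field(default_factory=list)
    out_of_scope_uses: list = field(default_factory=list)

    def missing_fields(self):
        """Names of fields left empty, so reviewers can hold back approval."""
        return [key for key, value in asdict(self).items() if not value]


# Hypothetical system used only for illustration
card = ModelCard(
    name="claims-triage-v1",
    intended_use="Rank incoming claims for manual review priority.",
    risk_tier="high",
    training_data_sources=["internal claims archive (hypothetical)"],
    known_limitations=["Not validated on claims filed outside the pilot region."],
)

card_json = json.dumps(asdict(card), indent=2)  # shareable with a review board
gaps = card.missing_fields()
print(gaps)
```

Here the card is flagged because nobody has recorded which uses are out of scope, which is exactly the kind of omission that leads to overconfidence.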

Fostering Trust: Transparency, Reliability, Accountability

Trust is earned through evidence and consistency. Responsible Artificial Intelligence requires demonstrable reliability and clear accountability structures. When models fail, organizations must know why they failed and who is responsible for remediation.

Transparency does not mean exposing proprietary algorithms. It means explaining system behavior in understandable terms, logging decisions where appropriate, and maintaining traceability. Techniques such as explainability methods, robust monitoring, and bias testing contribute to this goal.
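
Decision logging and traceability can be sketched as a structured record written for each automated decision. The example below is one possible shape, under stated assumptions: the `decision_record` function, its field names, and the sample values are hypothetical. It hashes the inputs rather than storing them, so the log can show which inputs led to a decision without keeping sensitive data in the log itself.

```python
import hashlib
import json
from datetime import datetime, timezone


def decision_record(model_version, features, decision, explanation, reviewer=None):
    """Build one traceable log entry for an automated decision."""
    # Sort keys so the same inputs always produce the same hash.
    canonical = json.dumps(features, sort_keys=True).encode("utf-8")
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_hash": hashlib.sha256(canonical).hexdigest(),
        "decision": decision,
        "explanation": explanation,  # plain-language reason, not model internals
        "reviewer": reviewer,  # filled in when a human confirms or overrides
    }


# Hypothetical decision used only for illustration
entry = decision_record(
    model_version="credit-risk-2.3",
    features={"income_band": "C", "tenure_months": 18},
    decision="refer",
    explanation="Short account tenure; referred for manual review.",
)

log_line = json.dumps(entry)  # one JSON object per line in an append-only log
print(log_line)
```

Recording the model version alongside each decision is what allows a later investigation to tie a failure to a specific release and an accountable owner.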

Reliability also depends on validation under realistic conditions. Continuous monitoring for model drift, performance degradation, and unexpected behavior is essential. Responsible Artificial Intelligence is not achieved at launch. It is maintained through disciplined oversight.
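
Monitoring for drift can start with a simple comparison between the data a model was validated on and the data it sees in production. The sketch below uses the Population Stability Index (PSI), one common drift measure and not the only option. The function name, the bin count, and the sample values are assumptions for illustration, and both inputs are assumed to be non-empty lists of numbers.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """Compare two samples of one numeric feature; 0.0 means identical shares.

    Bins are equal-width over the range of `expected` (the baseline sample).
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back when the baseline is constant

    def shares(values):
        counts = [0] * bins
        for value in values:
            index = int((value - lo) / width)
            # Clamp values outside the baseline range into the edge bins.
            counts[min(max(index, 0), bins - 1)] += 1
        # A small floor avoids taking the log of zero for empty bins.
        return [max(count / len(values), 1e-6) for count in counts]

    baseline, current = shares(expected), shares(actual)
    return sum((c - b) * math.log(c / b) for b, c in zip(baseline, current))


# Hypothetical feature values: validation data vs. shifted production data
validation = [i / 100 for i in range(100)]
production = [0.5 + i / 200 for i in range(100)]

psi_same = population_stability_index(validation, validation)
psi_shifted = population_stability_index(validation, production)
print(psi_same, psi_shifted > 0.25)
```

A value above roughly 0.25 is often treated as a sign of a significant shift, but that is a rule of thumb. Teams should set alert thresholds based on how the model behaves under realistic conditions.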

Adaptive Movement: Continuous Evaluation and Improvement

AI systems operate in dynamic environments. Data distributions shift. User behavior changes. Regulations evolve. Responsible Artificial Intelligence therefore requires adaptive movement.

Organizations should establish regular review cycles to assess performance, fairness, and security. Feedback loops from users and stakeholders help surface blind spots. Iteration should be treated as a strength rather than an admission of weakness.

Adaptive Movement also means staying informed about evolving standards and legal frameworks. Responsible Artificial Intelligence is increasingly influenced by regional regulations, industry guidance, and emerging best practices. Remaining agile helps organizations stay compliant while preserving their capacity to innovate.

Purposeful Creation: Aligning AI with Clear Objectives

Not every problem requires AI. Responsible Artificial Intelligence begins with strategic intent. Systems should be deployed to address clearly defined objectives, not because AI is available or fashionable.

Purposeful Creation forces alignment between business goals, societal expectations, and ethical standards. It prevents the proliferation of poorly governed systems that add complexity without value. Responsible Artificial Intelligence is strongest when linked to measurable outcomes such as improved quality, reduced error rates, enhanced safety, or increased accessibility.

By anchoring AI initiatives to clear objectives, organizations reduce risk and increase accountability. Every deployment should answer the question: what responsible value does this create?