AI compliance is entering a new phase in 2026. What was once treated as a legal or security afterthought is now shaping how organizations design, deploy, and scale AI itself. As AI systems move closer to regulated decisions, financial reporting, and core operations, compliance expectations are converging across industries. This article breaks down AI compliance into five practical domains that reflect how AI is actually governed today. Rather than listing regulations in isolation, it presents a layered view of AI compliance that mirrors real enterprise workflows, highlighting where scrutiny is highest, where standards are still emerging, and why governance maturity has become a competitive advantage.

Executive Takeaways

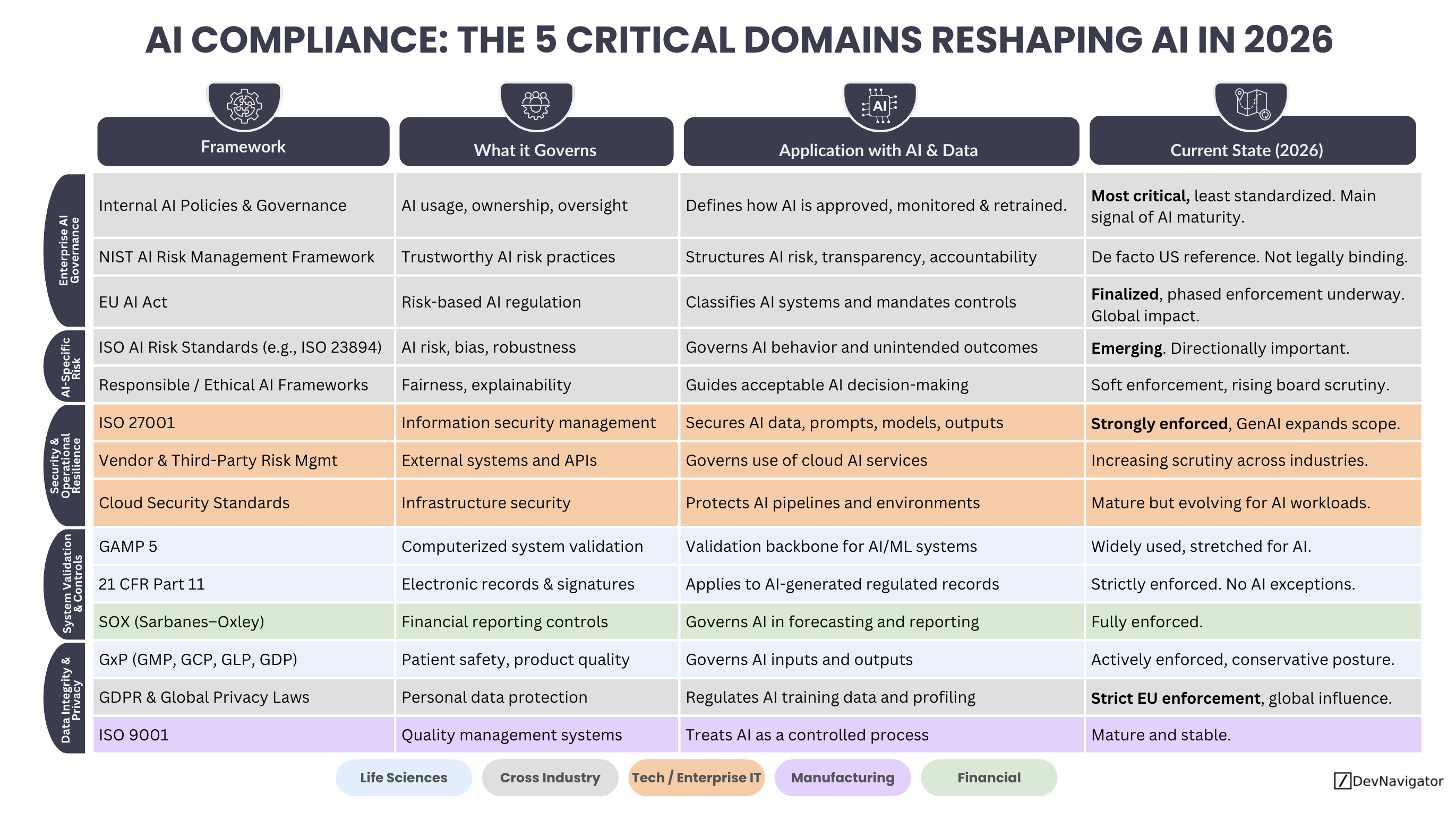

- AI compliance has become layered, spanning governance, risk, security, validation, and data integrity rather than living in a single regulation.

- Different industries face different pressure points, with life sciences, finance, and enterprise IT experiencing the most immediate enforcement.

- AI compliance maturity now signals operational readiness, not just regulatory awareness.

Expanded Insights

Enterprise AI Governance Sets the Ceiling

At the top of the stack sits enterprise AI governance. This is where organizations define who owns AI, how it is approved, how risk is escalated, and when humans must intervene. In 2026, AI compliance begins here because regulators increasingly expect organizations to demonstrate intent, not just controls. Internal AI policies, risk frameworks, and governance committees often determine whether downstream controls are applied consistently or selectively. AI compliance at this layer is uneven across industries, but it has become the clearest signal of overall AI maturity.
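The governance questions above (who owns a system, whether it was approved, and when a human must intervene) can be captured in a simple register. The following is a minimal sketch, not a prescribed format: the `AIUseCase` fields, the `RiskTier` levels, and the rule that high-risk use cases always need human review are illustrative assumptions, not requirements drawn from any specific regulation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class RiskTier(Enum):
    # Hypothetical tiers; real frameworks define their own categories.
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class AIUseCase:
    name: str
    owner: str                  # accountable person or team
    risk_tier: RiskTier
    approved_by: Optional[str]  # None until a governance body signs off
    human_review: bool          # whether a human must review outputs


def governance_gaps(use_case: AIUseCase) -> List[str]:
    """Return the governance questions this record leaves unanswered."""
    gaps = []
    if not use_case.owner:
        gaps.append("no accountable owner")
    if use_case.approved_by is None:
        gaps.append("not approved")
    # Assumed rule for illustration: high-risk use cases need human review.
    if use_case.risk_tier is RiskTier.HIGH and not use_case.human_review:
        gaps.append("high risk without human review")
    return gaps
```

Running a check like this across every registered use case is one way to see whether controls are being applied consistently or selectively.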

AI-Specific Risk and Ethics Are Moving From Theory to Practice

The second domain focuses on AI-specific risk, including bias, robustness, and unintended outcomes. While ethical AI discussions were once aspirational, AI compliance in 2026 increasingly requires evidence of monitoring and accountability. Emerging standards and regulatory guidance are shaping expectations around explainability and human oversight, particularly for systems that influence people, safety, or material outcomes. This domain remains less enforced than others, but scrutiny is rising quickly as AI systems scale beyond experimentation.

Security and Operational Resilience Are No Longer Optional

Security has become one of the most enforceable pillars of AI compliance. AI systems expand the attack surface through training data, prompts, models, APIs, and vendor dependencies. In 2026, AI compliance in this domain focuses not just on protecting infrastructure, but also on safeguarding model behavior and preventing data leakage. Enterprise IT and security teams now treat AI pipelines as production systems subject to the same controls as core platforms. This is one of the few areas where enforcement is already strong and growing.

System Validation and Controls Anchor Regulated Industries

For regulated sectors, especially life sciences and public companies, AI compliance hinges on validation and controls. Systems that generate regulated records, influence manufacturing or quality decisions, or touch financial reporting must be demonstrably reliable. Traditional validation frameworks are being stretched to accommodate machine learning and adaptive systems, but the underlying expectations have not changed. AI compliance here is less about innovation and more about discipline, traceability, and consistency.

Data Integrity and Privacy Remain the Foundation

At the base of the stack sit data integrity and privacy. Every AI compliance conversation eventually returns to data: where it came from, how it was transformed, and whether it can be trusted. In 2026, privacy regulations continue to shape what data can be used for training and inference, while data integrity principles determine whether AI outputs are defensible. Without strong controls at this layer, compliance efforts higher up the stack tend to collapse under audit or regulatory review.
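One way to make "where it came from and how it was transformed" auditable is a tamper-evident lineage log, in which each entry's hash covers the previous entry. The sketch below is illustrative only: the `record_step` and `verify` helpers and the entry fields are hypothetical names, and a production system would also need access controls, durable storage, and trusted timestamps.

```python
import hashlib
import json


def _digest(body):
    # Serialize with sorted keys so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def record_step(chain, step, payload):
    """Return a new chain with an entry whose hash covers the previous hash."""
    prev_hash = chain[-1]["hash"] if chain else ""
    body = {"step": step, "payload": payload, "prev": prev_hash}
    return chain + [{**body, "hash": _digest(body)}]


def verify(chain):
    """Check that no entry was altered, reordered, or removed mid-chain."""
    prev_hash = ""
    for entry in chain:
        body = {"step": entry["step"], "payload": entry["payload"], "prev": entry["prev"]}
        if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


# Usage: log the source and each transformation, then verify before an audit.
chain = record_step([], "ingest", {"source": "lab_results.csv", "rows": 1200})
chain = record_step(chain, "deduplicate", {"rows": 1187})
print(verify(chain))
```

Because each hash depends on everything before it, editing an earlier entry after the fact causes verification to fail, which is the property an auditor would look for.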

Why This Layered View Matters

The most important shift in AI compliance is conceptual. Organizations are moving away from asking which regulation applies, and toward understanding how multiple domains interact. AI compliance is no longer solved by passing one audit or adopting one framework. It is solved by building a governance stack that aligns industry expectations, operational reality, and risk tolerance.

In 2026, the organizations that scale AI successfully are not those that avoid regulation, but those that design for AI compliance from the start.